PrismML Launches Energy-Efficient 1-Bit LLM to Enhance AI Accessibility

Originally: PrismML debuts energy-sipping 1-bit LLM in bid to free AI from the cloud

90% Headline Accuracy

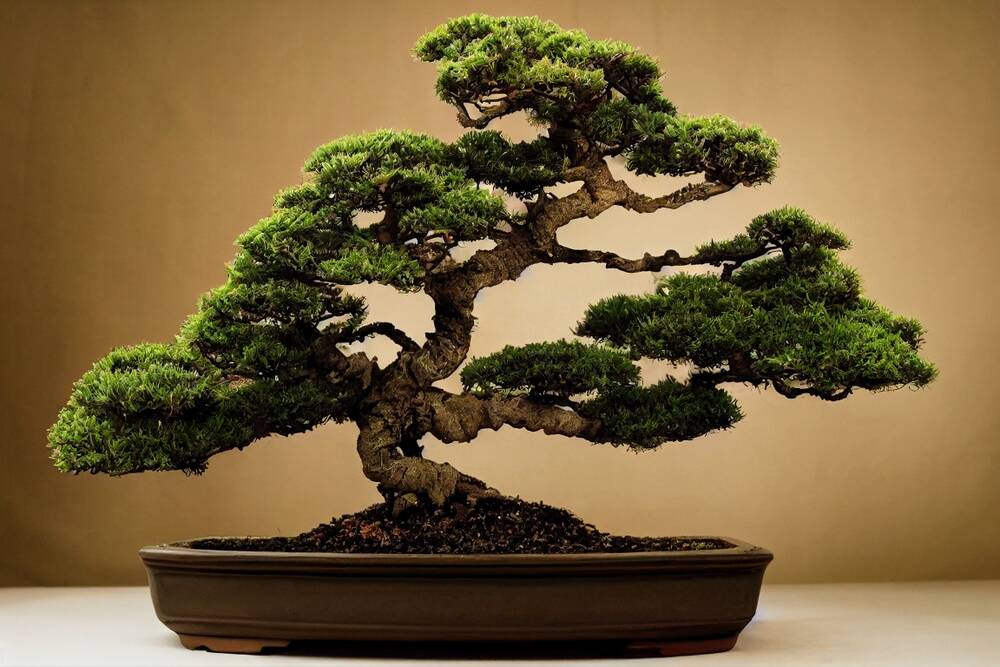

PrismML has introduced Bonsai 8B, a 1-bit large language model that the company says is 14 times smaller and five times more energy efficient than full-precision counterparts. Bonsai 8B fits into 1.15 GB of memory and, by PrismML's account, delivers over 10 times the intelligence density of full-precision models. CEO Babak Hassibi stated, "We see 1-bit not as an endpoint, but as a starting point," emphasizing the model's potential to change how AI is deployed on mobile and edge devices. The model is designed to run natively on Apple devices and Nvidia GPUs, which could significantly reduce reliance on cloud computing for AI applications.
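
The size figures in the summary above can be sanity-checked with simple arithmetic. The sketch below is illustrative only and rests on assumptions that the summary does not confirm: that "8B" means roughly 8 billion parameters, that the full-precision baseline stores 16 bits per weight, and that 1 GB means 10^9 bytes. The function and variable names are hypothetical.

```python
def weights_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Storage for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9       # assumption: "8B" means roughly 8 billion parameters
REPORTED_GB = 1.15   # memory footprint quoted in the summary

# Assumption: the full-precision baseline uses 16 bits per weight.
fp16_gb = weights_size_gb(N_PARAMS, 16)

# Idealized lower bound: exactly 1 bit per weight, no overhead.
one_bit_gb = weights_size_gb(N_PARAMS, 1)

# How much smaller the reported footprint is than the assumed baseline.
ratio = fp16_gb / REPORTED_GB

# Average number of bits per weight implied by the reported footprint.
effective_bits = REPORTED_GB * 1e9 * 8 / N_PARAMS

print(f"16-bit baseline:        {fp16_gb:.2f} GB")
print(f"ideal 1-bit size:       {one_bit_gb:.2f} GB")
print(f"baseline / reported:    {ratio:.1f}x")
print(f"implied bits per weight: {effective_bits:.2f}")
```

Under these assumptions the baseline comes to 16 GB, and dividing by the reported 1.15 GB gives a ratio of about 13.9, which is consistent with the "14 times smaller" claim. The reported footprint sits slightly above the idealized 1 GB; the summary does not say what accounts for the difference.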

Key Takeaways

- Bonsai 8B is 14x smaller and 5x more energy efficient than full-precision counterparts.

- The model delivers over 10x the intelligence density of full-precision counterparts.

- PrismML's models can run on Apple devices and Nvidia GPUs, enhancing on-device AI capabilities.

- Two additional models, Bonsai 4B and Bonsai 1.7B, are also available under the Apache 2.0 license.

- The approach is based on research by Caltech professor Babak Hassibi and aims to redefine AI efficiency metrics.

Why This Matters

The development of Bonsai 8B aligns with the growing trend toward edge computing, which seeks to minimize latency and reduce dependency on cloud infrastructure. As AI applications expand into mobile and real-time environments, models like PrismML's could facilitate broader adoption of AI across sectors such as robotics and enterprise systems.

Headline vs. Article Context

The headline emphasizes energy efficiency, which is a key aspect of the article.

This summary was generated by AI from original reporting by The Register. Always verify important details with the original source.